
Causal Discovery: From Correlation to Mechanism

Time-series data invite models that predict the next step and then claim understanding. In physical, biological, and engineered systems, however, the real scientific target is dynamical: how states evolve, which pathways mediate that evolution, and how interventions ripple through coupled variables. Correlation-rich predictors can hide spurious linkage inside smooth error reduction. The Causal Dynamics Engine (CDE) is built for teams that need mechanism-level claims that remain coherent when the world pushes back.

Prediction and mechanism are different objectives. Conflating them produces models that look good in retrospect but offer no guidance when you need to intervene or explain behavior to a regulator. CDE targets mechanism-level understanding that supports intervention reasoning.

The practical stakes are high. In process engineering, a predictive model that misses causal structure can suggest interventions with no effect — or worse, unintended downstream consequences. In drug development, a correlation mistaken for causation can misdirect clinical investment. These are routine consequences of applying predictive tools to causal questions without architectural safeguards.

Separating dynamics from causal structure

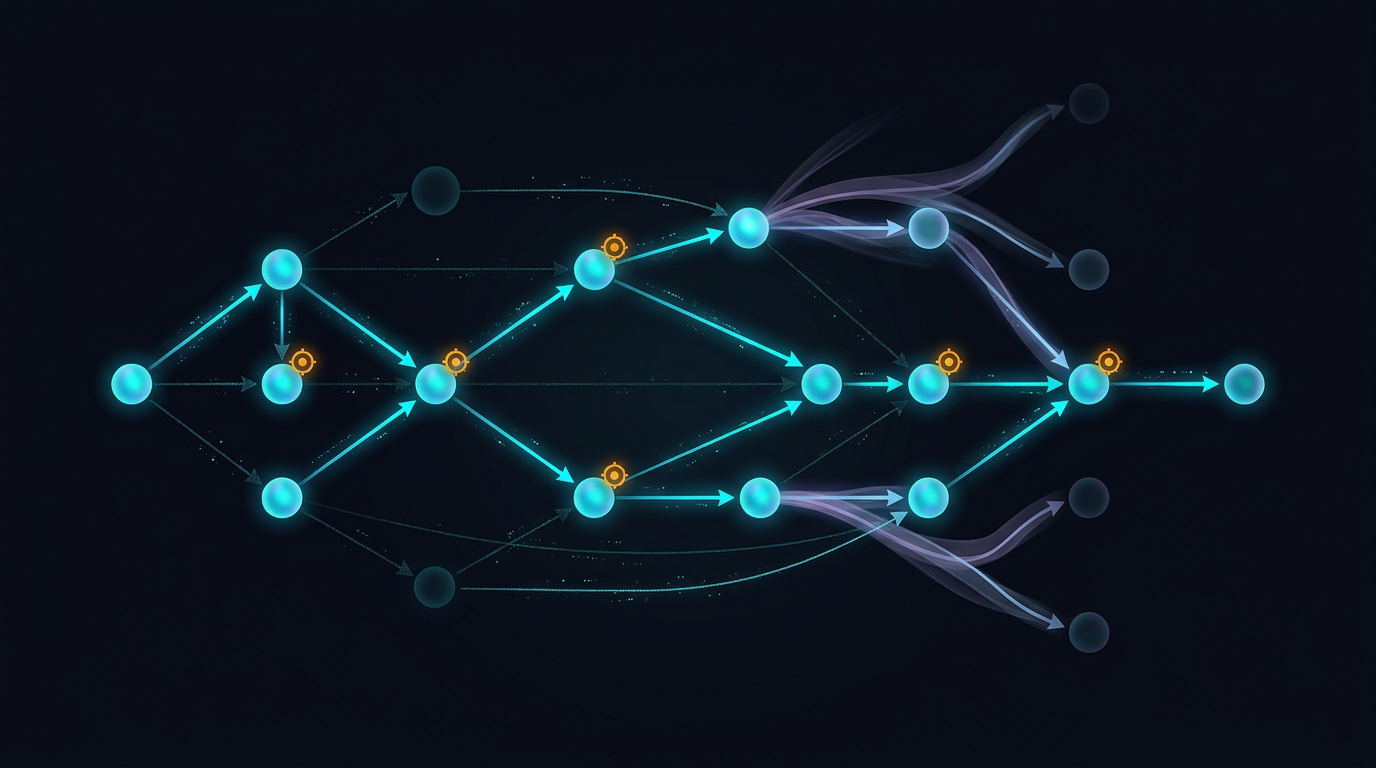

CDE maintains separate representations for continuous dynamics and causal structure. Many systems evolve smoothly in state space even when their causal skeleton is sparse and interpretable. Forcing a single model to handle both smears mechanism into undifferentiated nonlinearity. Separating these concerns makes failures diagnosable.

Why separation matters

A single model asked to learn both smooth dynamics and sparse causal structure faces a fundamental tension. Continuous evolution encourages dense connectivity. But real causal structure is typically sparse: most variables do not directly influence most others, and the edges that exist carry specific mechanistic meaning. Separate representations let each component optimize for its natural objective — tracking state evolution with high fidelity on one side, enforcing structural sparsity for causal queries on the other.
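As a minimal sketch of that separation, the toy below keeps dense dynamics weights and a sparse causal mask in different fields of one container. The class name `DualFieldModel`, the linear update rule, and the convention that `mask[i][j] = 1` means "variable j may influence variable i" are all illustrative assumptions, not CDE's actual representation.

```python
from dataclasses import dataclass


@dataclass
class DualFieldModel:
    """Hypothetical container: dynamics parameters and causal skeleton kept apart."""
    weights: list  # dense dynamics parameters; weights[i][j] scales the effect of j on i
    mask: list     # sparse causal skeleton; mask[i][j] == 1 allows the edge j -> i

    def step(self, state, dt=0.1):
        """One explicit Euler step of the masked linear dynamics (an assumed toy form)."""
        n = len(state)
        return [
            state[i] + dt * sum(
                self.mask[i][j] * self.weights[i][j] * state[j] for j in range(n)
            )
            for i in range(n)
        ]

    def parents(self, i):
        """Structural query answered from the mask alone, ignoring self-loops."""
        return [j for j, allowed in enumerate(self.mask[i]) if allowed and j != i]


model = DualFieldModel(
    weights=[[-1.0, 0.5, 0.5], [2.0, -1.0, 0.5], [0.5, 3.0, -1.0]],
    mask=[[1, 0, 0], [1, 1, 0], [0, 1, 1]],  # chain: x0 -> x1 -> x2
)
next_state = model.step([1.0, 0.0, 0.0])
```

Because the mask is a separate field, a structural question such as `model.parents(2)` never touches the dynamics weights, and a tracking error can be attributed to either the weights or the skeleton.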

CDE treats interventions, identifiability, and path-level behavior as first-class outputs — not post-hoc interpretations layered onto a predictive model.

Active probing when passive data underdetermines mechanism

Observational variation does not always excite every edge of a causal graph. Some directions simply never move in natural data. CDE includes active investigation primitives that propose targeted probes — in simulation or where real interventions are feasible — to disambiguate competing explanations under budget constraints. The engine buys bits of identifiability the dataset could not provide passively.

Probe design under constraints

Active probing is not unlimited experimentation. In most settings, interventions are expensive or ethically constrained. CDE uses budget-aware probe design: it identifies which interventions would resolve ambiguous causal edges, ranks them by expected identifiability gain, and proposes a sequence that respects practical constraints. In simulation environments, probes execute directly. In physical systems, proposed probes inform experimental design decisions made by domain experts.
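One way to picture budget-aware probe design is a greedy planner. In the sketch below, the `Probe` type, the `expected_gain` score, and the gain-per-cost ranking rule are assumptions chosen for illustration. The source says only that probes are ranked by expected identifiability gain and sequenced under practical constraints.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Probe:
    """Hypothetical candidate intervention."""
    name: str
    expected_gain: float  # assumed score, e.g. expected bits of identifiability
    cost: float           # assumed positive cost in budget units


def plan_probes(candidates, budget):
    """Greedily pick probes by gain per unit cost until the budget runs out."""
    ranked = sorted(candidates, key=lambda p: p.expected_gain / p.cost, reverse=True)
    plan, remaining = [], budget
    for probe in ranked:
        if probe.cost <= remaining:
            plan.append(probe)
            remaining -= probe.cost
    return plan


candidates = [
    Probe("clamp_valve", expected_gain=3.0, cost=5.0),
    Probe("step_setpoint", expected_gain=2.0, cost=2.0),
    Probe("swap_feedstock", expected_gain=1.0, cost=4.0),
    Probe("pulse_heater", expected_gain=1.5, cost=3.0),
]
plan = plan_probes(candidates, budget=7.0)
```

With a budget of 7, this plan selects `step_setpoint` and then `clamp_valve`. Greedy selection is not guaranteed to be optimal; it stands in here for whatever ranking a real planner would use, and in a physical system the resulting list would be a proposal for domain experts rather than something executed automatically.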

Edge fusion and conservative graphs

Learned graphs are tempting to read as ground truth, but gradient-based discovery can favor edges that are convenient for the optimizer rather than real. Edge ablation fusion stress-tests candidate influences by comparing dynamics under selective suppressions and integrating evidence across regimes. Links survive because they remain explanatory under perturbation, not because they won a single loss minimum on a narrow bundle of trajectories.
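The idea behind edge ablation fusion can be sketched with a toy linear model. Everything below is an assumption made for illustration: the one-step linear predictor, the mean-squared-error score, the `threshold` value, and the "must matter in every regime" voting rule. It is not CDE's actual procedure.

```python
def predict(weights, state, target):
    """One-step linear prediction of variable `target` from the full state."""
    return sum(w * x for w, x in zip(weights[target], state))


def mse(weights, transitions, target):
    """Mean squared one-step error for `target` over (state, next_state) pairs."""
    errors = [(predict(weights, s, target) - s_next[target]) ** 2
              for s, s_next in transitions]
    return sum(errors) / len(errors)


def ablation_gain(weights, transitions, target, source):
    """Error increase when the edge source -> target is suppressed."""
    ablated = [row[:] for row in weights]
    ablated[target][source] = 0.0
    return mse(ablated, transitions, target) - mse(weights, transitions, target)


def fuse_edges(regimes, candidates, threshold=1e-3, min_fraction=1.0):
    """Keep an edge only if ablating it hurts in at least `min_fraction` of regimes."""
    kept = []
    for target, source in candidates:
        votes = [ablation_gain(w, tr, target, source) > threshold for w, tr in regimes]
        if sum(votes) / len(votes) >= min_fraction:
            kept.append((target, source))
    return kept


# True mechanism in this toy: x1_next = 0.5 * x0. In regime A, x2 happens to
# equal x0, so a fit can split the weight between x0 and x2 without penalty.
regime_a = (
    [[0.9, 0.0, 0.0], [0.25, 0.0, 0.25], [0.0, 0.0, 0.9]],
    [((1.0, 0.0, 1.0), (0.9, 0.5, 0.9)), ((2.0, 0.0, 2.0), (1.8, 1.0, 1.8))],
)
# In regime B, x2 moves independently of x0 and the fit puts no weight on it.
regime_b = (
    [[0.9, 0.0, 0.0], [0.5, 0.0, 0.0], [0.0, 0.0, 0.9]],
    [((1.0, 0.0, -1.0), (0.9, 0.5, -0.9)), ((2.0, 0.0, 0.5), (1.8, 1.0, 0.45))],
)
surviving = fuse_edges([regime_a, regime_b], candidates=[(1, 0), (1, 2)])
```

Here the edge from x2 to x1 looks explanatory in regime A but contributes nothing in regime B, so only the edge from x0 to x1 survives fusion.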

Conservatism as a scientific virtue

CDE's conservative bias in graph construction is deliberate. In causal discovery, false positives are more dangerous than false negatives: a spurious edge can misdirect interventions, waste experimental budgets, or undermine regulatory submissions. CDE reports fewer edges with higher confidence rather than producing dense graphs with speculative connections. Practitioners who need broader exploration can adjust sensitivity through policy settings, but the default is conservative — in causal reasoning, what you claim not to know matters as much as what you claim to have found.
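A conservative default with an adjustable sensitivity might look like the sketch below. The `GraphPolicy` name, the `min_confidence` field, and the 0.95 default are hypothetical; the source does not specify how CDE's policy settings are expressed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GraphPolicy:
    """Hypothetical policy setting controlling how readily edges are reported."""
    min_confidence: float = 0.95  # assumed conservative default


def report_edges(edge_confidence, policy=GraphPolicy()):
    """Split candidate edges into reported and explicitly withheld sets."""
    reported = {e for e, c in edge_confidence.items() if c >= policy.min_confidence}
    withheld = set(edge_confidence) - reported
    return reported, withheld


confidence = {("a", "b"): 0.99, ("b", "c"): 0.80, ("a", "c"): 0.40}
default_reported, default_withheld = report_edges(confidence)
exploratory_reported, _ = report_edges(confidence, GraphPolicy(min_confidence=0.75))
```

Returning the withheld set alongside the reported one keeps "what you claim not to know" visible instead of silently dropping it.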

Typed outputs for scientific disagreement

CDE emits structured claims: statements about identifiability under observed excitation, path-level dynamical summaries, and out-of-distribution response expectations. Those claim types let two teams disagree productively (on architecture, on priors, on data limits) while still checking whether their intervention predictions align.

On demanding physical domains such as tokamak confinement scaling at ITER scale, CDE-style discovery has shown path fidelity high enough for domain experts to treat its dynamical tracking as credible. Results on your dataset may differ; the point is architectural: when dual-field modeling (separate representations for dynamics and causal structure), probing, and conservative edge scrutiny come together, causal dynamics becomes an engineering artifact.

Claim types and scientific discourse

Typed outputs facilitate structured scientific disagreement. When two teams produce conflicting causal claims, claim types provide a common vocabulary. Is the disagreement about identifiability — one team had more excitation? About graph structure — one team found an edge the other did not? About intervention predictions — both agree on structure but differ on magnitude? Each disagreement type has a different resolution path, and typed claims route the conversation accordingly.
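The routing described above can be sketched as a small classifier over two teams' claims. The `CausalClaim` fields, the `Disagreement` enum, and the tolerance `tol` are assumptions introduced for illustration. They are not CDE's claim schema.

```python
from dataclasses import dataclass
from enum import Enum


class Disagreement(Enum):
    IDENTIFIABILITY = "identifiability"
    STRUCTURE = "structure"
    MAGNITUDE = "magnitude"
    NONE = "none"


@dataclass
class CausalClaim:
    """Hypothetical typed claim from one team."""
    edges: set         # (source, target) pairs the team asserts
    identifiable: set  # edges whose status the team's data could determine
    effects: dict      # (source, target) -> predicted intervention effect


def classify(a, b, tol=0.1):
    """Name the kind of disagreement so it can follow the right resolution path."""
    contested = a.edges ^ b.edges  # edges asserted by exactly one team
    if contested:
        both_identified = all(e in a.identifiable and e in b.identifiable
                              for e in contested)
        return Disagreement.STRUCTURE if both_identified else Disagreement.IDENTIFIABILITY
    for edge in a.edges:
        if abs(a.effects[edge] - b.effects[edge]) > tol:
            return Disagreement.MAGNITUDE
    return Disagreement.NONE


xy, zy = ("x", "y"), ("z", "y")
team_a = CausalClaim(edges={xy}, identifiable={xy, zy}, effects={xy: 1.0})
team_b = CausalClaim(edges={xy, zy}, identifiable={xy}, effects={xy: 1.0, zy: 0.3})
kind = classify(team_a, team_b)
```

In this example the teams differ on the edge from z to y, but team B's data could not determine that edge, so the sketch routes the dispute to identifiability (collect more excitation) rather than to an argument about structure.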

Validation across domains

Validating causal claims is harder than validating predictive models. A predictive model can be tested against held-out data; a causal model must be tested against intervention outcomes, counterfactual consistency, and structural stability under regime change. CDE's validation framework addresses each dimension: testing intervention responses against observed outcomes, checking structural stability under data perturbation, and reporting identifiability diagnostics that indicate which edges are well-determined and which remain ambiguous.

Validation operates throughout the discovery process, providing early warnings when claims rest on thin evidence and flagging edges whose status changes as more data arrive. A claim well-supported under early data may become ambiguous when later observations reveal unseen confounders. CDE surfaces those shifts rather than hiding them behind a static confidence score.
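Structural stability under data perturbation can be illustrated with a bootstrap check. This is a generic resampling sketch, not CDE's validation framework: `discover` stands in for any edge-discovery routine that returns a set of edges, and the 0.9 cutoff for "well-determined" is an assumed value.

```python
import random
from collections import Counter


def edge_stability(samples, discover, n_resamples=50, seed=0):
    """Fraction of bootstrap resamples in which each edge is rediscovered."""
    rng = random.Random(seed)
    counts = Counter()
    for _ in range(n_resamples):
        resample = [rng.choice(samples) for _ in samples]
        counts.update(discover(resample))  # discover must return a set of edges
    return {edge: count / n_resamples for edge, count in counts.items()}


def label_edges(stability, well_determined=0.9):
    """Turn stability fractions into the two diagnostic labels used in the text."""
    return {edge: "well-determined" if freq >= well_determined else "ambiguous"
            for edge, freq in stability.items()}


def toy_discover(rows):
    """Stand-in discovery rule: report an edge when the mean product exceeds 0.5."""
    edges = set()
    if sum(x * y for x, y, z in rows) / len(rows) > 0.5:
        edges.add(("x", "y"))
    if sum(z * y for x, y, z in rows) / len(rows) > 0.5:
        edges.add(("z", "y"))
    return edges


# x always matches y; z matches y in only half of the rows.
rows = [(1, 1, 1), (-1, -1, -1), (1, 1, 1), (-1, -1, -1),
        (1, 1, -1), (-1, -1, 1), (1, 1, -1), (-1, -1, 1)]
stability = edge_stability(rows, toy_discover)
labels = label_edges(stability)
```

Rerunning the same check as new data arrive, and comparing the labels between runs, is one simple way to surface an edge whose status has shifted rather than reporting a single static score.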

How the Causal mode fits the rest of CDE

Causal mode participates in the same promotion gates, emits typed claims compatible with ledger semantics, and respects Truth Dial tiers like every other mode. Real programs rarely arrive as pure causal questions. They arrive as messy bundles where some variables are smooth fields, some relationships are nearly algebraic, and some hypotheses are fundamentally about mechanism. CDE routes each part of a program to the mode that fits it rather than forcing everything through a single modeling style.

Composing causal and non-causal results

A research program may use symbolic mode to discover a conservation law, neural mode to represent a complex boundary condition, and Causal mode to establish the pathway connecting an upstream variable to a downstream outcome. These results compose because they share typed-claim infrastructure and the evidence ledger. A conservation law from symbolic mode can constrain the state space that Causal mode explores. A neural surrogate can provide fast forward simulation while the causal graph provides structural explanation. That composability comes from designing all four modes around a common scientific contract.

For leadership teams, the operational implication is risk management. Causal claims are the ones most likely to inform expensive interventions — clinical, operational, or engineering — so they deserve the strongest evidentiary discipline.

CDE embodies a specific architectural commitment: causal reasoning in dynamic systems deserves its own computational infrastructure, not a post-hoc interpretation layer on predictive models. For teams working where interventions carry real consequences — process engineering, drug development, energy systems, climate modeling — that commitment translates into better-informed decisions, more defensible regulatory submissions, and a scientific record future teams can build on.

Causal Dynamics Engine (CDE) and MatterSpace are patent pending in the United States and other countries. Vareon, Inc.